ChatGPT vs. Gemini vs. Claude for Traders: Which AI Should You Use?

You've heard the hype. ChatGPT can help you trade. Gemini has real-time data. Claude writes better code. Every platform claims to be "the best AI for traders."

So which one should you actually use?

This comparison examines three major AI chatbots across practical trading-research tasks, including earnings analysis, Pine Script debugging, and unfamiliar-stock research. It highlights where each platform is useful and where its limitations matter.

Here's what we learned: there's no single "best" AI for trading. But there is a best AI for your specific workflow. And by the end of this guide, you'll know exactly which one that is.

The Bottom Line: Quick Recommendations for Traders

If you're short on time, here's our verdict:

| Your Priority | Best Choice | Why |
|---|---|---|
| Real-time market research | Gemini | Native Google Search integration gives the fastest access to current news and prices |
| Analyzing earnings reports & SEC filings | Claude | Superior document analysis and nuanced reasoning |
| Writing trading code (Pine Script, Python) | Claude | Best-in-class coding benchmarks (77.2% on SWE-bench) |
| All-around assistant for varied tasks | ChatGPT | Most versatile, largest ecosystem, good at everything |
| Best free option | All three | Each offers a capable free tier, so test them all |

The honest truth? Most serious traders end up using two or three of these tools for different tasks. We'll show you exactly how to do that later in this guide.

For a deeper dive into any single platform, our ChatGPT Day Trading Guide covers ChatGPT-specific workflows in detail.

What These AI Chatbots Can (and Can't) Do for Day Traders

Before we compare these tools, let's set realistic expectations. This matters—especially when your money is on the line.

What LLMs CAN Help With

Large language models like ChatGPT, Gemini, and Claude are genuinely useful for:

- Research and summarization — Digesting earnings reports, SEC filings, and market commentary

- Explaining complex concepts — Understanding options Greeks, market structure, or technical patterns

- Generating and debugging code — Writing Pine Script indicators, Python backtests, or Excel formulas

- Analyzing documents you upload — Finding specific details in 10-K filings or earnings transcripts

- Brainstorming strategy ideas — Exploring "what if" scenarios and testing your assumptions

What LLMs CANNOT Do

And here's the critical part—these are research assistants, not trading systems:

- They don't have real-time market data feeds — Even Gemini's web search isn't the same as a live Bloomberg terminal

- They can't execute trades — No API connection to your broker

- They sometimes hallucinate — Confidently stating incorrect facts, especially about specific prices or dates

- They're not financial advisors — Their training data includes both good and terrible advice

These limitations are why we categorize LLMs as "Level 4" tools in our 4-Level AI Framework—powerful for research and learning, but not for execution or real-time decisions.

Head-to-Head Comparison: The Specs That Matter to Traders

Let's cut through the marketing and look at what these platforms actually offer as of January 2026:

| Feature | ChatGPT (GPT-5.2) | Gemini 2.5 Pro | Claude Sonnet 4.5 |
|---|---|---|---|
| Context Window | 196K tokens | 1M tokens | 200K tokens (1M beta) |
| Real-Time Web Access | ✅ Yes (Browse) | ✅ Yes (Best) | ❌ No |
| Knowledge Cutoff | August 2025 | January 2025 | February 2025 |
| Image/Chart Analysis | ✅ Strong | ✅ Native multimodal | ✅ Strong |
| Coding Benchmark (SWE-bench Verified) | 74.9% (GPT-5) | 63.8% | 77.2% |
| Document Upload | ✅ Yes | ✅ Yes | ✅ Yes |
| Free Tier | ✅ GPT-5 (limited) | ✅ Flash (limited) | ✅ Sonnet (limited) |
| Paid Price | $20/month | $20/month | $20/month |

What These Specs Mean for Traders

Context window determines how much of a document the AI can "see" at once. A typical earnings transcript runs 15,000-20,000 tokens. A 10-K annual report? That's 60,000-80,000 tokens. All three can handle single documents, but if you want to compare multiple years of filings simultaneously, Gemini's 1M token window has a clear advantage.

Web access is the elephant in the room. Can the AI look up current information? Gemini does this natively through Google Search—fast and seamless. ChatGPT's Browse feature works but can be slower. Claude has no web access at all, which means you need to provide any current data yourself.

Coding benchmarks matter if you write Pine Script, Python backtests, or automated strategies. Claude Sonnet 4.5 currently leads with a 77.2% score on SWE-bench Verified—the industry standard for real-world coding tasks.

Real-Time Data Access: The Critical Difference for Traders

This deserves its own section because it's the biggest practical limitation for day traders.

The uncomfortable truth: No LLM has a direct feed to real-time market data. You cannot ask ChatGPT, Gemini, or Claude "What's AAPL trading at right now?" and get a reliable, instant answer the way you would from TradingView or your broker.

Here's how each platform handles current information:

Gemini: Best-in-Class for Current Information

Gemini has native Google Search integration. When you ask about recent events or current prices, it automatically searches and synthesizes results. For traders, this means:

- Quickly checking recent news on a ticker

- Finding earnings dates and analyst estimates

- Getting current price approximations (with some delay)

- Researching recent market events

The search happens seamlessly—you don't need to toggle anything on.

ChatGPT: Good, But Not as Seamless

ChatGPT's Browse feature enables web searches, but the experience varies. Sometimes it's fast and accurate. Other times it takes longer or returns outdated information. It works, but Gemini does it better.

Claude: No Web Access at All

Claude cannot access the internet. Period. If you need current information, you must either:

- Copy-paste the data into your prompt

- Upload a document containing the information

- Use another tool first, then bring the results to Claude

This sounds like a dealbreaker—and for real-time research, it is. But for deep document analysis where you provide the data, Claude's lack of web access is irrelevant.

The Trader's Workaround

Smart traders don't rely on any LLM for real-time data. Instead, they:

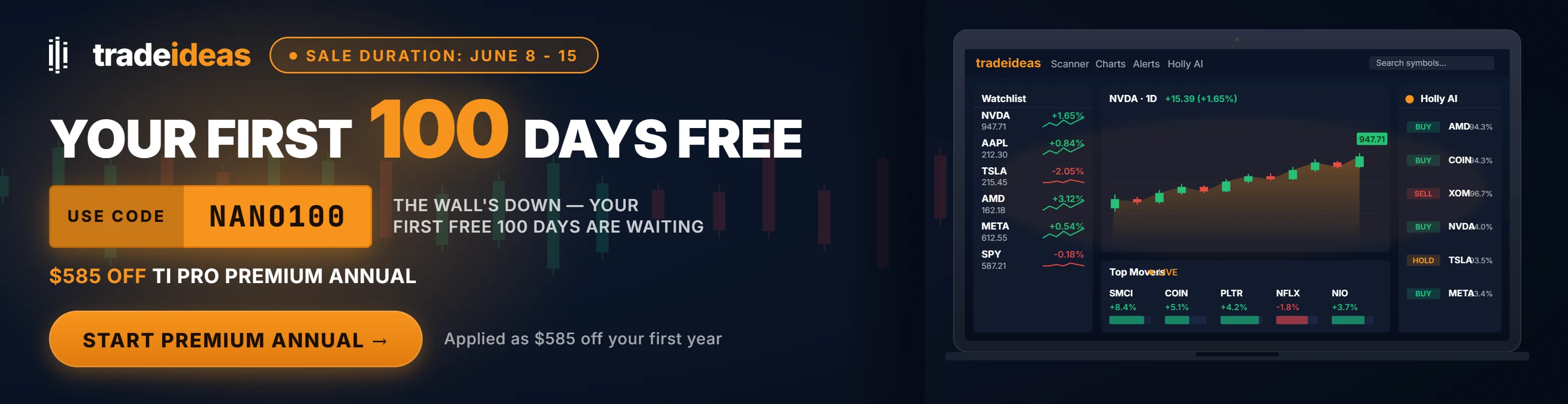

1. Use a proper trading platform (TradingView, Trade Ideas) for live prices and scanning

2. Use LLMs for analysis of data they've gathered

3. Verify any AI-provided "facts" before acting on them

This is why tools like Trade Ideas remain essential—they provide what LLMs fundamentally cannot: real-time market scanning and actionable alerts.
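The verification step in that workaround can be partly mechanized. Here is a minimal Python sketch, assuming you enter the reference price yourself from your broker or charting platform. The function name `is_price_consistent` and the 1% default tolerance are illustrative choices, not part of any platform's API.

```python
def is_price_consistent(ai_price: float, reference_price: float,
                        tolerance_pct: float = 1.0) -> bool:
    """Return True if the AI-quoted price is within tolerance_pct percent
    of a reference price taken from a trusted source."""
    if reference_price <= 0:
        raise ValueError("reference_price must be positive")
    deviation_pct = abs(ai_price - reference_price) / reference_price * 100
    return deviation_pct <= tolerance_pct


# Hypothetical numbers for illustration only.
print(is_price_consistent(ai_price=182.50, reference_price=171.13))  # False: about 6.6% apart
print(is_price_consistent(ai_price=182.50, reference_price=182.10))  # True: about 0.2% apart
```

A check like this only catches numeric mismatches. Quotes, dates, and claims about company performance still need a manual look at the primary source.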

Context Windows Explained: Why Size Matters for Financial Documents

"Context window" is tech jargon that traders need to understand. Think of it as the AI's working memory—how much text it can "see" and process at once.

Current Context Windows (January 2026)

| Model | Context Window | Rough Equivalent |
|---|---|---|
| GPT-5.2 (Thinking mode) | 196,000 tokens | ~150,000 words |
| Gemini 2.5 Pro | 1,000,000 tokens | ~750,000 words |
| Claude Sonnet 4.5 | 200,000 tokens (1M beta) | ~150,000 words |

What This Means in Practice

| Document Type | Approx. Tokens | Fits in ChatGPT? | Fits in Gemini? | Fits in Claude? |
|---|---|---|---|---|
| Single earnings call transcript | ~15,000 | ✅ | ✅ | ✅ |
| 10-K annual report | ~60,000-80,000 | ✅ | ✅ | ✅ |
| Multiple 10-Ks (3 years comparison) | ~200,000+ | ❌ | ✅ | ✅ (beta) |
| Entire code repository | ~500,000+ | ❌ | ✅ | ❌ |

The practical reality for most traders: Unless you're comparing multiple years of filings simultaneously or analyzing an entire codebase, all three platforms have sufficient context windows. The 1M token advantage only matters for very specific use cases.
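The fit check behind that table is simple arithmetic, sketched below in Python. The window sizes and per-document estimates come from this article. The 10,000-token reserve for your prompt and the model's reply is an assumption, as are the function names, and Claude is modeled at its standard 200K window rather than the 1M beta.

```python
# Context windows in tokens, as listed in this article.
CONTEXT_WINDOWS = {
    "ChatGPT (GPT-5.2)": 196_000,
    "Gemini 2.5 Pro": 1_000_000,
    "Claude Sonnet 4.5": 200_000,  # standard window; the 1M beta is not modeled
}

# Rough per-document estimates from this article (upper end of each range).
DOC_TOKENS = {
    "earnings_transcript": 20_000,
    "10-K": 80_000,
}


def fits(doc_counts: dict, window: int, reserve: int = 10_000) -> bool:
    """True if the documents plus a reserve for prompt and reply fit in the window."""
    total = sum(DOC_TOKENS[name] * count for name, count in doc_counts.items())
    return total + reserve <= window


def platforms_that_fit(doc_counts: dict) -> list:
    """Names of the platforms whose window can hold the given documents."""
    return [name for name, window in CONTEXT_WINDOWS.items()
            if fits(doc_counts, window)]


print(platforms_that_fit({"10-K": 1}))  # one filing fits everywhere
print(platforms_that_fit({"10-K": 3}))  # three filings at 80K each need 240K plus reserve
```

Token counts vary with the tokenizer and the filing, so treat the result as a rough screen rather than a guarantee.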

Winner for long documents: Gemini (1M tokens standard) or Claude (1M beta for heavy users)

The 7 Trading Tasks: Which AI Wins Each One?

This is where the comparison gets practical. This guide evaluates each platform on seven documented use cases and names the one that performs best on each.

Task 1: Researching a Stock You Don't Know

The scenario: Someone mentions a ticker in a chat room. You've never heard of it. You need a quick overview before market open.

Winner: Gemini

Gemini's native search integration shines here. Ask "What does PLTR do and why is it moving today?" and you get a synthesized answer drawing from current sources—company overview, recent news, analyst sentiment.

ChatGPT with Browse enabled works too, but it's slower and sometimes returns stale information. Claude can't help with current events at all unless you paste in the news yourself.

Task 2: Analyzing an Earnings Report

The scenario: A company just reported after hours. You want to understand the key numbers, management guidance, and any red flags.

Winner: Claude

This is Claude's sweet spot. Upload the earnings transcript or 10-K, and Claude provides nuanced, thorough analysis. It asks clarifying questions ("Do you want me to focus on revenue guidance or margin trends?"), identifies specific management language worth noting, and connects dots across different sections.

ChatGPT is strong here too—just slightly more verbose and less likely to probe deeper without prompting. Gemini handles it well but doesn't match Claude's analytical depth.

Task 3: Writing Pine Script for TradingView

The scenario: You want a custom indicator—say, an RSI divergence alert with volume confirmation.

Winner: Tie (Claude & ChatGPT)

Both produce clean, functional Pine Script v5 code. Claude tends to write more robust code with better edge-case handling. ChatGPT is more conversational about explaining what each line does.

Gemini works but occasionally uses outdated Pine Script syntax, requiring more debugging.

Critical note: Always test AI-generated code in TradingView's paper trading mode before using it live. Never trust any code—AI-generated or otherwise—with real money until you've verified it yourself.
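To make the scenario concrete, here is a rough Python sketch of the logic such an indicator encodes. It is written in Python rather than Pine Script so it can be run and checked anywhere. The divergence rule is deliberately simplified (window lows instead of pivot detection), and the function names, lookback, and volume multiplier are assumptions for illustration, not output from any of the three platforms.

```python
def rsi(closes, period=14):
    """Wilder's RSI. Returns a list aligned with closes; the first `period` entries are None."""
    if len(closes) <= period:
        return [None] * len(closes)
    changes = [closes[i] - closes[i - 1] for i in range(1, len(closes))]
    gains = [max(c, 0.0) for c in changes]
    losses = [max(-c, 0.0) for c in changes]
    avg_gain = sum(gains[:period]) / period
    avg_loss = sum(losses[:period]) / period

    def to_rsi(avg_g, avg_l):
        if avg_l == 0:
            return 100.0  # no losses in the window: RSI is capped at 100
        return 100.0 - 100.0 / (1.0 + avg_g / avg_l)

    out = [None] * period + [to_rsi(avg_gain, avg_loss)]
    for i in range(period, len(changes)):
        # Wilder smoothing: blend the previous average with the newest change
        avg_gain = (avg_gain * (period - 1) + gains[i]) / period
        avg_loss = (avg_loss * (period - 1) + losses[i]) / period
        out.append(to_rsi(avg_gain, avg_loss))
    return out


def bullish_divergence(closes, volumes, lookback=20, period=14, vol_mult=1.5):
    """Flag the latest bar when price closes below its `lookback` low while RSI
    holds above its own low for that window and volume beats vol_mult x average."""
    values = rsi(closes, period)
    if len(closes) < lookback + 1 or values[-lookback - 1] is None:
        return False  # not enough history for a full window of RSI values
    prior_closes = closes[-lookback - 1:-1]
    prior_rsi = values[-lookback - 1:-1]
    prior_vol = volumes[-lookback - 1:-1]
    price_lower_low = closes[-1] < min(prior_closes)
    rsi_higher_low = values[-1] > min(prior_rsi)
    volume_confirmed = volumes[-1] > vol_mult * (sum(prior_vol) / lookback)
    return price_lower_low and rsi_higher_low and volume_confirmed


# Made-up series: steady sell-off, partial bounce, then a marginal new low.
closes = [100, 98, 96, 94, 92, 90, 88, 86, 84, 82, 80, 81, 82, 83, 84, 85, 79]
volumes = [1000] * 16 + [2000]
print(bullish_divergence(closes, volumes, lookback=10, period=5))  # True
```

Having the rule in plain code gives you something to compare against whatever Pine Script an assistant produces, which helps with the manual review recommended above.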

Task 4: Debugging Python Backtesting Code

The scenario: Your backtest is throwing errors or producing unexpected results.

Winner: Claude

Claude Sonnet 4.5 leads the industry on coding benchmarks for a reason. It's exceptionally good at identifying bugs, understanding your intent, and suggesting fixes. The 77.2% SWE-bench score translates to real-world effectiveness.

ChatGPT GPT-5 is close behind (74.9% on SWE-bench) and perfectly capable. Gemini is the weakest of the three for complex debugging tasks.
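One common cause of "unexpected results" is look-ahead bias, where a signal computed from a bar's close is used to earn that same bar's return. The pure-Python sketch below illustrates the bug with a hypothetical moving-average rule and made-up prices. The `lag` parameter is an assumption introduced here to toggle the bug on and off.

```python
def sma(values, window):
    """Simple moving average; None until enough bars exist."""
    return [
        None if i + 1 < window else sum(values[i + 1 - window:i + 1]) / window
        for i in range(len(values))
    ]


def backtest(closes, window=3, lag=1):
    """Long when close > SMA, flat otherwise. Returns total return as a fraction.

    lag=1 acts on the bar after the signal (realistic).
    lag=0 lets the same bar's close both trigger the trade and earn that
    bar's return, which is look-ahead bias.
    """
    averages = sma(closes, window)
    signal = [a is not None and c > a for c, a in zip(closes, averages)]
    equity = 1.0
    for i in range(1, len(closes)):
        j = i - lag  # index of the signal that decides the position for bar i
        if j >= 0 and signal[j]:
            equity *= closes[i] / closes[i - 1]
    return equity - 1.0


# Made-up, deliberately choppy prices.
closes = [100, 101, 99, 102, 98, 103, 97, 104]
print(f"with look-ahead: {backtest(closes, lag=0):+.1%}")
print(f"one-bar lag:     {backtest(closes, lag=1):+.1%}")
```

On this series the rule shows a gain with `lag=0` and a loss with `lag=1`. When you paste a misbehaving backtest into an assistant, asking it to check specifically for this kind of timing error is a useful first prompt.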

Task 5: Understanding a Complex Trading Concept

The scenario: You want to understand implied volatility skew, order flow dynamics, or why the VIX and SPY sometimes move together.

Winner: ChatGPT (slight edge)

All three excel at education, but ChatGPT has a slight edge in conversational teaching. It uses more analogies, adjusts complexity based on follow-up questions, and maintains a friendly tone that makes learning easier.

Claude is equally knowledgeable but slightly more formal. Gemini can supplement explanations with current examples via search.

Task 6: Analyzing Your Trading Journal

The scenario: You've logged 50 trades this month. You want to identify patterns—when you perform best, what mistakes repeat, what emotions correlate with losses.

Winner: Claude

Journal analysis requires nuanced pattern recognition and psychological insight—Claude's wheelhouse. Upload your journal (CSV, text, whatever format), and Claude identifies patterns you might miss: "Your win rate drops significantly on Mondays," or "When you mention feeling 'anxious' in your notes, your position sizing increases by 40%."
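A pattern like the Monday example can also be checked directly, which is a good way to verify whatever the AI reports. Below is a minimal sketch using only Python's standard library. It assumes a CSV journal with `date` (ISO format) and `pnl` columns, which are hypothetical column names, and the sample rows are made up.

```python
import csv
import io
from collections import defaultdict
from datetime import date

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday"]


def win_rate_by_weekday(csv_text: str) -> dict:
    """Return {weekday name: win rate} for a journal with `date` and `pnl` columns."""
    wins = defaultdict(int)
    totals = defaultdict(int)
    for row in csv.DictReader(io.StringIO(csv_text)):
        day = WEEKDAYS[date.fromisoformat(row["date"]).weekday()]
        totals[day] += 1
        if float(row["pnl"]) > 0:  # a trade counts as a win only if it made money
            wins[day] += 1
    return {day: wins[day] / totals[day] for day in totals}


# Made-up journal entries for illustration.
journal = """date,pnl
2026-01-05,-120.50
2026-01-06,85.00
2026-01-12,-40.00
2026-01-13,60.25
2026-01-19,30.00
"""

for day, rate in win_rate_by_weekday(journal).items():
    print(f"{day}: {rate:.0%}")
```

With only a handful of trades per weekday, differences like these are easily noise, so treat them as prompts for review rather than conclusions.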

For more on leveraging your journal, see our Trading Journal Psychology guide.

Task 7: Getting Current Market News and Sentiment

The scenario: You want a quick read on market sentiment before the open. What's the narrative today?

Winner: Gemini

This is Gemini's clearest victory. Native Google Search means it can scan recent headlines, synthesize analyst commentary, and give you a sentiment summary in seconds. ChatGPT with Browse enabled is a distant second. Claude simply can't compete here without web access.

Important caveat: Always verify news from primary sources. AI can summarize, but it can also miss nuance or conflate different stories.

Code Generation Showdown: Pine Script and Python for Traders

For technical traders who write their own indicators and backtests, code quality matters. Here's how the three platforms compare:

Coding Benchmark Scores (January 2026)

| Model | SWE-bench Verified | What It Means |
|---|---|---|
| Claude Sonnet 4.5 | 77.2% (82% with parallel compute) | Best-in-class for complex coding |
| ChatGPT GPT-5 | 74.9% | Excellent, close second |
| Gemini 2.5 Pro | 63.8% | Good, but trails the leaders |

Practical Test: RSI Divergence Indicator

We asked each AI to write a Pine Script v5 indicator that identifies RSI divergence with volume confirmation. Results:

| Criteria | ChatGPT | Gemini | Claude |
|---|---|---|---|
| Code runs without errors | ✅ | ✅ | ✅ |
| Follows Pine Script v5 syntax | ✅ | Mostly | ✅ |
| Includes helpful comments | ✅ | ✅ | ✅ |
| Handles edge cases | Good | Fair | Excellent |
| Code organization | Good | Fair | Excellent |

Our take:

- Claude produces the cleanest, most robust code with thoughtful error handling

- ChatGPT is excellent and better at explaining its choices conversationally

- Gemini works but occasionally uses deprecated syntax requiring manual fixes

Pro tip: When generating trading code, always:

1. Test in paper trading mode first

2. Review the logic manually instead of just trusting it

3. Backtest thoroughly before going live

Document Analysis: Earnings Reports, 10-Ks, and SEC Filings

Financial documents are dense, long, and full of jargon. Here's how each AI handles them:

Claude: The Document Analysis Champion

Claude excels at deep document analysis:

- Uploads entire 10-Ks without truncation

- Asks clarifying questions to focus the analysis

- Identifies specific risk factors, guidance changes, and management tone

- Maintains context across the entire document

- Produces nuanced, professional-grade analysis

ChatGPT: Strong, But More Verbose

ChatGPT handles documents well:

- Strong summarization capabilities

- Good at extracting specific metrics

- Sometimes summarizes too aggressively, losing nuance

- May lose context in very long documents

- More conversational output style

Gemini: Good, With a Unique Advantage

Gemini's document analysis:

- Handles large documents well (1M token context)

- Strong at pulling specific data points

- Can cross-reference uploaded documents with web search for additional context

- Less nuanced reasoning than Claude on complex analysis

Winner for Document Analysis: Claude (by a narrow margin over ChatGPT)

Hallucination Risk: Why Accuracy Matters in Finance

Let's address the uncomfortable reality: all LLMs sometimes generate confident-sounding wrong information. In finance, this can be costly.

Types of Financial Hallucinations We've Seen

- Inventing stock prices or dates — "AAPL closed at $182.50 on March 15" when that's incorrect

- Misattributing quotes — Claiming a CEO said something they didn't

- Creating plausible-sounding statistics — "Studies show 73% of traders..." with no actual source

- Mixing up company data — Confusing metrics between similar tickers

How Each Platform Handles Accuracy

| Model | Hallucination Improvement | Our Assessment |
|---|---|---|
| GPT-5.2 | 10.9% error rate (improved from 16.8% in GPT-5) | Good, but verify important facts |
| Gemini 2.5 Pro | Improved, can verify via search | Good, but search isn't foolproof |
| Claude Sonnet 4.5 | Strong alignment, often asks for clarification | Good, but still verify critical info |

According to OpenAI's system card, GPT-5 responses are approximately 45% less likely to contain factual errors than GPT-4o, and GPT-5.2 Thinking has reduced this further to a 10.9% hallucination rate on representative queries.
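A quick calculation shows why a rate near 10.9% still calls for verification. The sketch below assumes each answer errs independently at that rate. That is a simplifying assumption, since real error rates vary by question type, and the function name is illustrative.

```python
def prob_at_least_one_error(error_rate: float, n_answers: int) -> float:
    """P(at least one wrong answer) = 1 - (1 - p)^n, assuming independent answers."""
    return 1.0 - (1.0 - error_rate) ** n_answers


for n in (1, 5, 10, 20):
    print(f"{n:>2} answers: {prob_at_least_one_error(0.109, n):.0%}")
```

Under that assumption, a research session of ten answers has roughly a two-in-three chance of containing at least one error.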

The Golden Rule

Never make a trading decision based solely on AI output.

Always verify:

- Specific prices, dates, and statistics

- Quotes attributed to executives

- Claims about company performance

- Any "fact" that would significantly affect your trade thesis

For a complete breakdown of AI risks in trading, see our guide on AI Trading Risks and Dangers.

Pricing Breakdown: Free vs. Paid for Traders

All three platforms offer free tiers that are genuinely useful. Here's what you get at each level:

Free Tier Comparison

| Feature | ChatGPT Free | Gemini Free | Claude Free |
|---|---|---|---|
| Model Access | GPT-5 (limited messages) | Gemini 2.5 Flash | Claude Sonnet 4.5 (limited) |
| Web Search | ✅ Yes | ✅ Yes | ❌ No |
| File Uploads | Limited | ✅ Yes | ✅ Yes |
| Message Limits | ~10 premium responses/5 hours | Generous limits | Rate limited |
| Good Enough for Trading Research? | ✅ For casual use | ✅ For research | ✅ For document analysis |

Paid Plans ($20/month each)

| Platform | Plan | What You Get |
|---|---|---|
| ChatGPT Plus | $20/mo | Full GPT-5.2, Thinking mode, higher limits, DALL-E, voice mode |
| Gemini Advanced | $20/mo | Full Gemini 2.5 Pro, 1M context, Google Workspace integration |
| Claude Pro | $20/mo | Higher message limits, priority access during peak times |

Our Recommendation

1. Start free with all three: test each on your actual workflows

2. Pay for one based on your primary use case, and don't pay for all three unless you're using them constantly

3. Consider value vs. time: if paying $20/month saves you an hour of research per week, that's excellent ROI

Most traders don't need all three paid subscriptions. Pick the one that matches your primary workflow and use the free tiers for occasional secondary tasks.
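The value-versus-time point reduces to a break-even calculation, sketched here in Python. The $20 and $60 figures come from this article. The 52/12 weeks-per-month conversion and the function name are assumptions.

```python
WEEKS_PER_MONTH = 52 / 12  # about 4.33


def break_even_hourly_value(monthly_cost: float, hours_saved_per_week: float) -> float:
    """Hourly value of your time at which the subscription pays for itself."""
    if hours_saved_per_week <= 0:
        raise ValueError("hours_saved_per_week must be positive")
    return monthly_cost / (hours_saved_per_week * WEEKS_PER_MONTH)


print(f"One plan:    ${break_even_hourly_value(20, 1):.2f} per hour")
print(f"Three plans: ${break_even_hourly_value(60, 1):.2f} per hour")
```

If an hour of your research time is worth more than the printed figure, the plan covers its cost at one hour saved per week.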

The Best AI Workflow for Day Traders

Rather than picking a single AI, many traders use each platform for its strengths. Here's a practical multi-tool approach:

Sample Morning Routine

Step 1: Market Scan with Gemini

"What's the market sentiment this morning? Any major news affecting my watchlist (NVDA, AAPL, SPY)?"

Gemini's live search gives you a quick pulse on overnight developments, analyst upgrades/downgrades, and sector trends.

Step 2: Earnings Analysis with Claude

Upload overnight earnings transcripts, then ask: "Summarize key guidance changes, management tone, and any red flags in this earnings call."

Claude's document analysis provides deeper insight than a headline skim.

Step 3: Strategy Brainstorm with ChatGPT

"Given bullish tech sentiment and elevated VIX, what adjustments should I consider for my momentum strategy today?"

ChatGPT's conversational style makes it excellent for thinking through scenarios.

Step 4: Real Trading with Real Tools

Use Trade Ideas for live scanning and alerts. Use TradingView for charting and execution.

LLMs are research assistants. Actual trading requires actual trading tools.
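If you run this routine daily, the Step 1 prompt can be generated from your watchlist instead of retyped. Here is a small sketch; the function name is hypothetical, and the output is plain text to paste into whichever chatbot you use, not a call to any vendor API.

```python
def market_scan_prompt(watchlist):
    """Build the Step 1 market-scan prompt for a list of ticker symbols."""
    tickers = ", ".join(t.strip().upper() for t in watchlist if t.strip())
    if not tickers:
        raise ValueError("watchlist is empty")
    return (
        "What's the market sentiment this morning? "
        f"Any major news affecting my watchlist ({tickers})?"
    )


print(market_scan_prompt(["nvda", "AAPL", " spy "]))
```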

Cost Consideration

Using all three paid plans costs $60/month. For active traders, this is trivial compared to a single bad trade. But most can get by with one paid plan plus the free tiers of the others.

For the complete picture on building your trading stack, see our AI Day Trading Complete Guide.

Frequently Asked Questions

Which AI is best for stock trading—ChatGPT, Gemini, or Claude?

The right choice depends on what you do most. If you spend most of your time analyzing earnings reports and SEC filings, Claude's superior document handling makes it the clear winner. If you need to quickly research unfamiliar stocks and check current news, Gemini's native Google Search is unmatched. For general-purpose assistance across varied tasks, ChatGPT's versatility and ecosystem make it the safest choice.

Key Takeaway: Test all three on your actual workflows before committing to one. Most serious traders end up using multiple tools.

Can ChatGPT, Gemini, or Claude access real-time stock prices?

Here's the distinction that matters: Gemini can search Google for recent stock prices, but there's latency involved—you're getting the price from when someone last wrote about it online, not a live quote. ChatGPT's Browse feature similarly searches the web but isn't connected to market data providers. Claude has no web access at all.

Key Takeaway: For real-time prices, use TradingView or your broker. Use AI for research and analysis, not live data.

Is Claude better than ChatGPT for analyzing earnings reports?

Both are capable, but Claude's approach to document analysis feels more like working with a thoughtful analyst. It probes deeper, identifies subtle language changes in management commentary, and produces more nuanced summaries. ChatGPT is strong but tends to be more verbose and sometimes summarizes too aggressively.

Key Takeaway: For deep document analysis, Claude has a meaningful edge. For quick summaries, both work well.

Which AI chatbot is best for writing Pine Script or Python trading code?

Claude produces cleaner code with better edge-case handling—the kind of robustness that matters when your code is making trading decisions. ChatGPT is nearly as capable and better at explaining its coding choices conversationally. Gemini works but trails the leaders and occasionally uses outdated syntax.

Key Takeaway: Use Claude or ChatGPT for trading code. Always test thoroughly before using any AI-generated code with real money.

Which AI has the largest context window for financial document analysis?

Gemini 2.5 Pro, with 1M tokens as standard. Claude Sonnet 4.5 offers 200K tokens (1M in beta) and GPT-5.2 offers 196K. For most trading use cases, though, all three have sufficient context. A single 10-K filing is about 60,000-80,000 tokens, which every platform handles easily. The 1M token advantage only matters if you're comparing multiple years of filings simultaneously or analyzing entire codebases.

Key Takeaway: Context window size rarely matters for typical trading research. Don't choose based solely on this spec.

Are the free versions of these AI tools good enough for trading research?

For occasional research, yes. ChatGPT free gives you access to GPT-5 with message limits. Gemini free provides the smaller Flash model with web search. Claude free offers Sonnet 4.5 with rate limits. Checking on a stock before buying, understanding a concept, or debugging simple code all work fine on the free tiers.

Key Takeaway: Start free with all three to find your preference. Upgrade when you consistently hit limits.

Can I upload a 10-K filing to Claude for analysis?

Yes. Upload the PDF or text file, and Claude will analyze it thoroughly. You can ask specific questions ("What are the top three risk factors?") or request comprehensive analysis. Claude excels at maintaining context across long documents and identifying nuanced details.

Key Takeaway: Claude is excellent for 10-K analysis. Upload the full document and ask targeted questions.

Which AI is least likely to hallucinate financial information?

There is no clear winner; each vendor tackles the problem differently. OpenAI reports that GPT-5.2 Thinking responses have 30% fewer errors than GPT-5.1 Thinking. Anthropic has invested heavily in Claude's "alignment," which aims to reduce confident wrong answers. Gemini's ability to search provides a natural fact-checking mechanism, though search results themselves can be wrong.

Key Takeaway: None are hallucination-proof. Always verify critical financial facts from primary sources before trading.

Should I pay for ChatGPT Plus, Gemini Advanced, or Claude Pro?

All three cost $20/month and all are excellent. The deciding factor is how you actually spend your time. If you're constantly researching stocks and checking news, Gemini's search integration delivers the most value. If you're analyzing filings and writing code, Claude's strengths align with your needs. If you need a general-purpose assistant for varied tasks, ChatGPT's ecosystem and versatility make it the safest bet.

Key Takeaway: Most traders don't need all three paid plans. Pick one based on your workflow and use free tiers for occasional secondary tasks.

Can any of these AI tools actually predict stock movements?

Let's be direct: these are language models trained on text, not crystal balls. They can help you research, analyze, and think through scenarios—but they cannot predict future prices any better than you can. Anyone selling an "AI trading bot" that promises to predict markets is either confused or scamming you.

Key Takeaway: Use AI for research and analysis. Predictions come from your own judgment based on that research. For more on distinguishing real AI tools from hype, see our guide on AI Trading Bots: Truth vs. Hype.

Our Verdict: Which AI for Which Trader?

After hundreds of hours testing these platforms on real trading tasks, here's our final take:

Choose ChatGPT (GPT-5.2) If You:

- Need an all-around assistant for varied tasks

- Want real-time web access AND strong coding

- Prefer one tool for everything

- Value the largest ecosystem (custom GPTs, plugins)

Choose Gemini 2.5 Pro If You:

- Prioritize current market information and news

- Work heavily with Google Workspace

- Need to analyze charts and visual data

- Want the largest context window (1M tokens)

Choose Claude Sonnet 4.5 If You:

- Focus on deep document analysis (10-Ks, earnings)

- Need the best coding assistance available

- Value nuanced, thoughtful reasoning

- Don't require real-time web access

The Real Answer

Most traders eventually use all three—Gemini for morning research, Claude for document analysis, ChatGPT for everything in between. The $60/month for all three paid plans is trivial compared to the cost of one bad trade made without proper research.

But if you're starting out, pick one based on your primary workflow. You can always add more later.

Disclaimer

The information provided in this article is for educational purposes only and should not be considered financial advice. Day trading involves substantial risk and is not suitable for every investor. Past performance is not indicative of future results.

AI tools discussed in this article can assist with research and analysis but cannot predict market movements or guarantee trading success. Always verify AI-generated information from primary sources before making trading decisions.

For our complete disclaimer, please visit: https://daytradingtoolkit.com/disclaimer/

Article Sources

- OpenAI — "Introducing GPT-5" (August 2025). Official announcement detailing GPT-5 capabilities, benchmarks, and hallucination improvements. https://openai.com/index/introducing-gpt-5/

- OpenAI — "Introducing GPT-5.2" (December 2025). Latest model specifications including 10.9% hallucination rate and improved reasoning. https://openai.com/index/introducing-gpt-5-2/

- Google DeepMind — "Gemini 2.5: Our newest Gemini model with thinking" (March 2025). Official Gemini 2.5 Pro specifications, 1M token context, and benchmark scores. https://blog.google/technology/google-deepmind/gemini-model-thinking-updates-march-2025/

- Anthropic — "Introducing Claude Sonnet 4.5" (September 2025). Official announcement of Claude Sonnet 4.5, SWE-bench scores (77.2%), and 30+ hour sustained operation. https://www.anthropic.com/news/claude-sonnet-4-5

- OpenAI — "Why Language Models Hallucinate" (September 2025). Research paper explaining hallucination causes and mitigation strategies. https://openai.com/index/why-language-models-hallucinate/

- SWE-bench — Industry-standard benchmark for evaluating real-world software engineering capabilities. Referenced for coding performance comparisons across models.

Written by

Kazi Mezanur Rahman, founder, independent researcher, and editor of DayTradingToolkit, a one-person publication focused on risk-first trading education, documented tool research, and clear explanations.
